AI and Computing Education

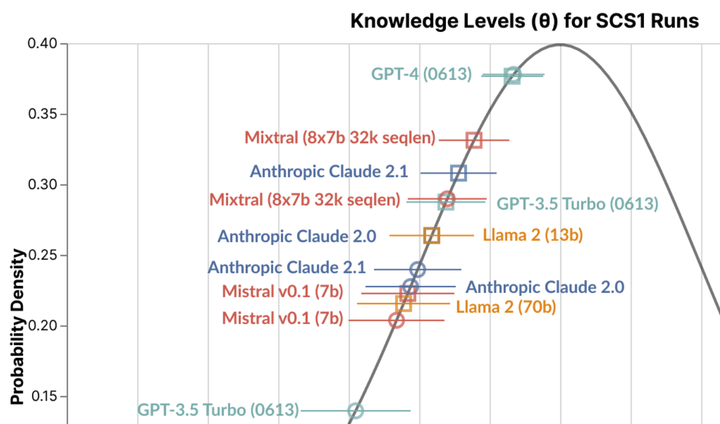

Benchmarking 10 LLMs on a CS concept inventory found that their responses resembled those of below-average introductory computing students (from ICER 2024 paper).

This research seeks to explore how generative AI tools change what is worth learning about computing, how we teach about computing and AI, and how we assess knowledge. The long-term goal is to develop instructional materials, assessments, and tools that support students in critically considering how AI tools augment their skills and capabilities.

Project Publications

ICER 2024: Used automated benchmarking infrastructure and expert review to understand differences between LLM and student performance on CS assessments that have validity evidence.

EAAI 2024: Conducted curricular co-design with cross-disciplinary high school teachers to understand teachers’ perspectives on AI, how to integrate learning about AI with disciplinary learning, and how to treat AI's limitations as pedagogical opportunities.

EAAI 2024b: Advised students who designed Prompty, a tool that scaffolded prompt engineering and output comparison for high school language arts students.

Constructionism 2023: Panel discussing how AI changes constructionist approaches to teaching and learning.

CHI AI Literacy Workshop 2023: Workshop position paper advocating for a plurality of AI literacies.

Talks

- Kapor Center 2024: Presented on ongoing research related to critically learning with and about AI.

Funding

This research is funded by a seed grant from the Stanford Accelerator for Learning, the Human-Centered AI Institute (HAI), and OpenAI.