Critical Interpretations of Data

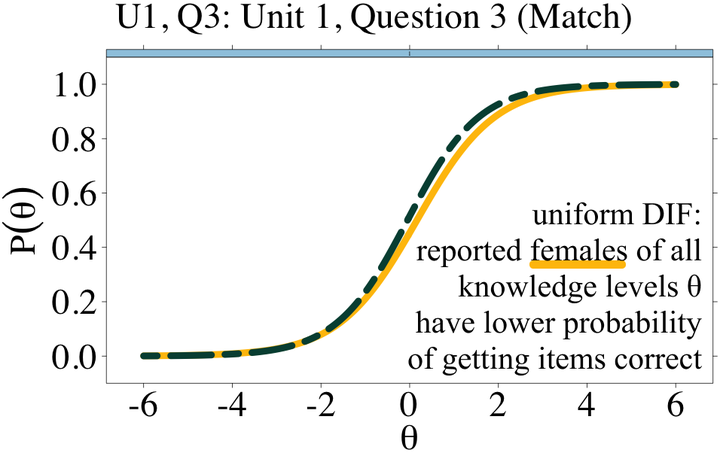

Gender-based test bias I found in a CS assessment used by thousands of students a year.

Data can measure the existence and extent of disparities and biases, but judgement is required to interpret and act on data-related findings. This research thread investigates designing critical and equitable human-data interactions that enable stakeholders (e.g., instructors, curriculum designers) to connect their domain expertise with their interpretations of data for equity-oriented outcomes. The long-term goal of this research is to design interactions that contextualize data for situated interpretation and equity-oriented actions.

Project Publications

ICER 2022: I conducted a critical demography of computing education research to identify and critique trends in how researchers collect, analyze, report, and use demographic data.

Dissertation 2022: My dissertation defined a framework for equity-oriented interpretations of data by considering domain experts’ cultural competence, relevant prior knowledge, and perceptions of power relationships.

PACMHCI/CSCW 2022: I designed and evaluated StudentAmp, a student feedback tool that contextualized feedback with intersectional demographic data to enable teaching teams of large remote computing courses to take equity-oriented action during the COVID-19 pandemic.

L@S 2021: I partnered with curriculum designers from Code.org to address psychometric data on assessment bias by gender and race.

SIGCSE 2019: I demonstrated the use of psychometric methods from Item Response Theory to the computing education community by analyzing results from a CS concept inventory.

Funding

This research has received funding and support from the National Science Foundation, Google Cloud, and the nonprofit Code.org.